webapp that improves the office hours experience by optimizing tutor pairings

background

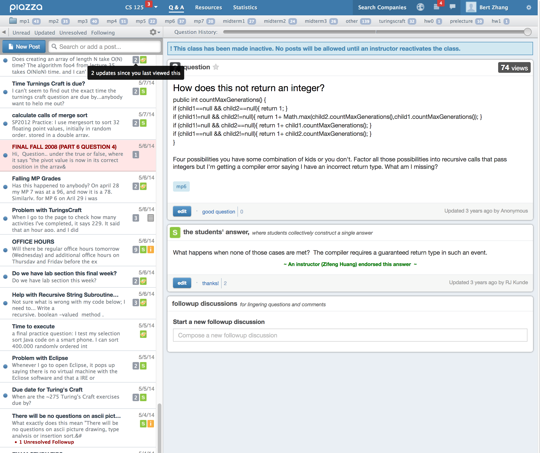

In the lower-level computer science courses at the University of Illinois, multiple teaching assistants (TAs) hold overlapping office hours to tutor over 500 students per course. Students use a webapp to enter a course’s single queue to meet with TAs, who physically find the student within the building to help them. We knew from first- or secondhand experience that both TAs and students were generally dissatisfied with the system.

finding pain points

We interviewed students and TAs from various academic and cultural backgrounds, identifying pain points in the service:

- Students couldn’t specify which TA they wanted, hindering tutoring effectiveness

- TAs sometimes couldn’t physically find students and had to skip them

- Time apportioned to students sometimes felt inadequate or disproportionate

We also examined solutions other courses used, but found that they didn’t adequately address these issues without bringing in problems of their own.

interviews

goal

Uncover pain points in the service.

action

Interviewed TAs and students from various academic and cultural backgrounds.

insights

TAs and students alike lamented the lack of communication and feedback channels in the service, leaving everyone unsure and frustrated.

competitive analysis

goal

Determine if there is an easy win just by switching platforms.

action

Examined other in-person and online student assistance channels other courses used.

insights

Online solutions suffered from vague and slow communication. Traditional office hours helped with finding students but required reserved space and limited comfort.

responding to pain points

Since the existing alternative solutions were lackluster, we opted to tweak the current system instead:

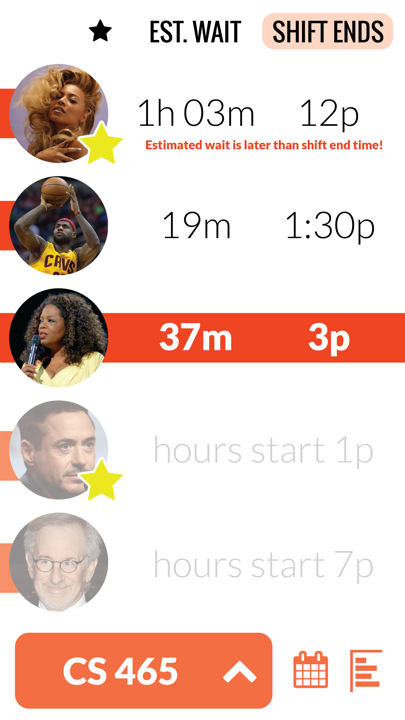

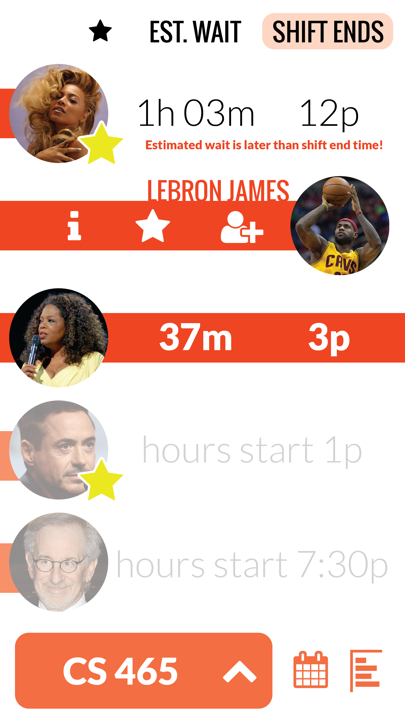

- Instead of a common queue, TAs now have their own individual queues. Students can queue for multiple TAs simultaneously, but are removed from all queues for a course when they are helped by a TA.

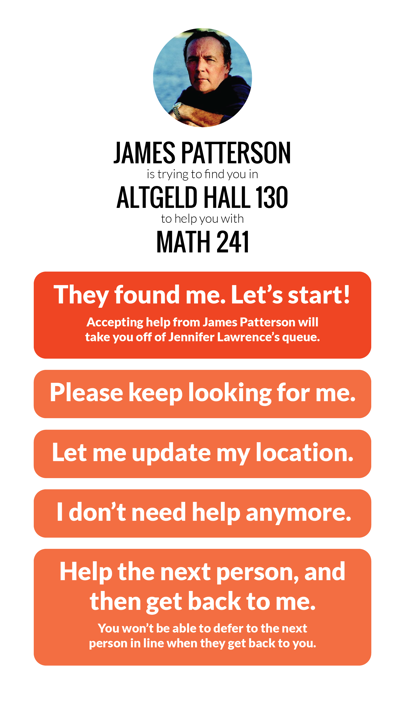

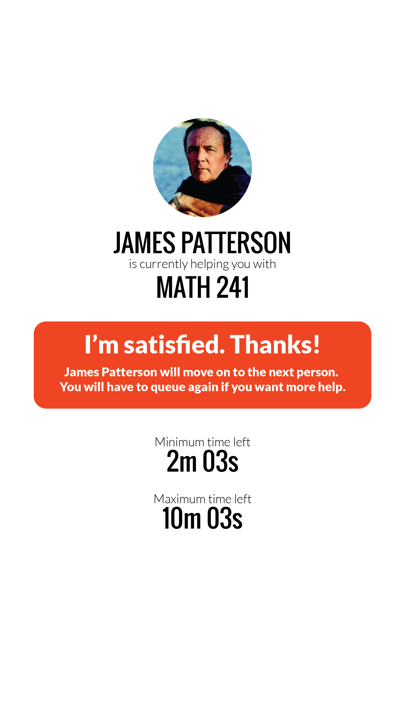

- TAs and students can now communicate to find one another easily, with university ID photos to aid the process.

- Minimum and maximum time limits give both TAs and students agency and shared expectations for help session length.
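The queue and time-limit rules above can be sketched in a few lines of Python. This is only an illustration of the proposed behavior, not the webapp's code: the class `CourseQueues`, its method names, the TA and student names, and the 5- and 15-minute limits are all hypothetical, and the reading of the time limits (a session may end after the minimum and must end at the maximum) is our assumption.

```python
from collections import defaultdict, deque


class CourseQueues:
    """Per-TA queues for one course (hypothetical model, not the real webapp)."""

    def __init__(self, min_minutes, max_minutes):
        if min_minutes > max_minutes:
            raise ValueError("min_minutes must not exceed max_minutes")
        self.min_minutes = min_minutes
        self.max_minutes = max_minutes
        self.queues = defaultdict(deque)  # TA name -> waiting students, in order

    def join(self, student, ta):
        # A student may wait for several TAs at once, but only once per TA.
        if student not in self.queues[ta]:
            self.queues[ta].append(student)

    def help_next(self, ta):
        # Take the first student waiting for this TA, then remove that
        # student from every other queue in the course.
        if not self.queues[ta]:
            return None
        student = self.queues[ta].popleft()
        for queue in self.queues.values():
            if student in queue:
                queue.remove(student)
        return student

    def may_end_session(self, elapsed_minutes):
        # Assumed reading: a session may end once the minimum has passed.
        return elapsed_minutes >= self.min_minutes

    def must_end_session(self, elapsed_minutes):
        # Assumed reading: a session ends once the maximum is reached.
        return elapsed_minutes >= self.max_minutes


# Example: one student queues for two TAs; being helped by one clears both.
course = CourseQueues(min_minutes=5, max_minutes=15)  # illustrative limits
course.join("ana", "ta_lee")
course.join("ana", "ta_kim")
course.join("ben", "ta_kim")
helped_by_lee = course.help_next("ta_lee")
helped_by_kim = course.help_next("ta_kim")
```

In this sketch, the student helped by `ta_lee` no longer appears in `ta_kim`'s queue, so `ta_kim` moves straight on to the next waiting student.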

evaluating the design

We took some creative risks with our interface that needed to be evaluated by users. Users gave us almost unanimous feedback that our skeuomorphic interface elements were terrible.

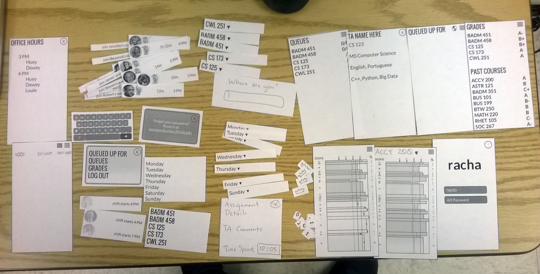

paper prototyping

goal

Evaluate the flow and visual signifiers of the interface.

action

Asked potential users to perform tasks on a paper prototype while thinking aloud.

insights

Skeuomorphic interface elements, like pulling a TA's ear for their attention or crowning them a favorite, were not intuitive.

heuristic evaluation

goal

Identify potential usability issues with the interface.

action

The university course assigned another team to evaluate wireframes against mobile interface heuristics.

insights

Some navigational elements had inconsistencies between pages, and some were not obviously interactive.

cognitive walkthrough

goal

Evaluate pre- and post-action signifiers in the interface.

action

The university course assigned another team to evaluate user flows through a cognitive walkthrough.

result

The external team did not provide us with a report that adequately described any issues.

refining the design

In response to feedback from users and other designers, we altered skeuomorphic interface elements to reflect current standards.

lessons for next time

We made some poor decisions during our design process:

- Instead of refining the design between evaluations, we ran the evaluations simultaneously, which led to fewer iterations.

- Using celebrities as TAs in our interface somewhat distracted users from evaluating it.

It was also unfortunate that we didn’t have the resources to rigorously test our proposed changes to the service system, and only iterated on the interface. Many of the changes in the design proposal are theoretically reasonable but empirically unproven.